The EarthScope-operated data systems of the NSF National Geophysical Facility are migrating to cloud services. To learn more about this effort and find resources, visit earthscope.org/data/cloud

As we build out cloud data systems, we want to help novices leverage them along with pros. One of EarthScope’s initial interfaces to help investigators explore cloud computing with NSF NGF data will be an interactive notebook computing platform. So if you aren’t yet familiar with notebooks, here’s a primer on what they are — and why many in science find them useful.

What’s a notebook?

Notebooks are an alternative way to write and run code that looks more like embedding code in an interactive document. The concept can be implemented in different forms (see our Javascript data access notebooks, for example), but we’ll focus here on Jupyter notebooks.

These notebooks can run code in a number of languages, like Python, R, or Julia, for some examples. The kernel process that executes code can run on your local machine, but it can also run on a server, allowing users to operate the notebook with nothing but a web browser.

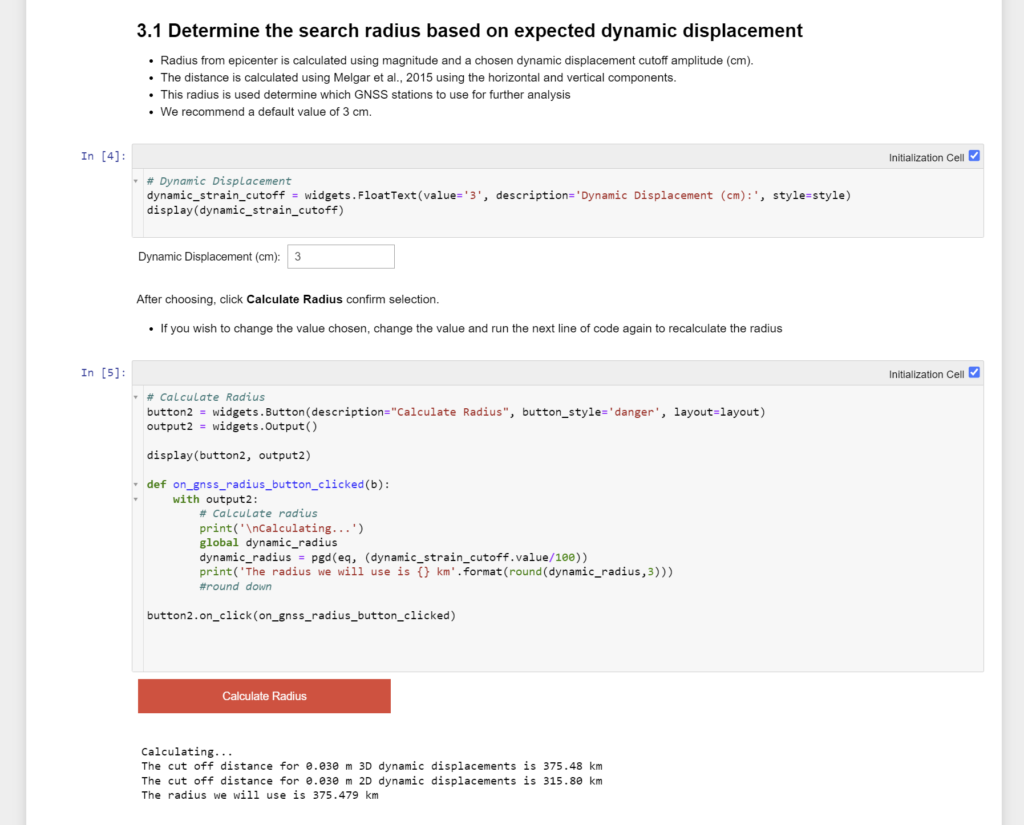

Sections (or “cells”) of a notebook can contain code or they can contain formatted text and other media content. This allows a notebook to contain rich directions, context, explanation, documentation, or anything else useful that you can think of. Notebooks can be much more user friendly and flexible than a script because of this ability to add “narrative” to your software tools.

The code cells in a notebook can be executed independently within a shared environment. Although out-of-order execution may sometimes require some care, this allows you to break your code into documented steps.

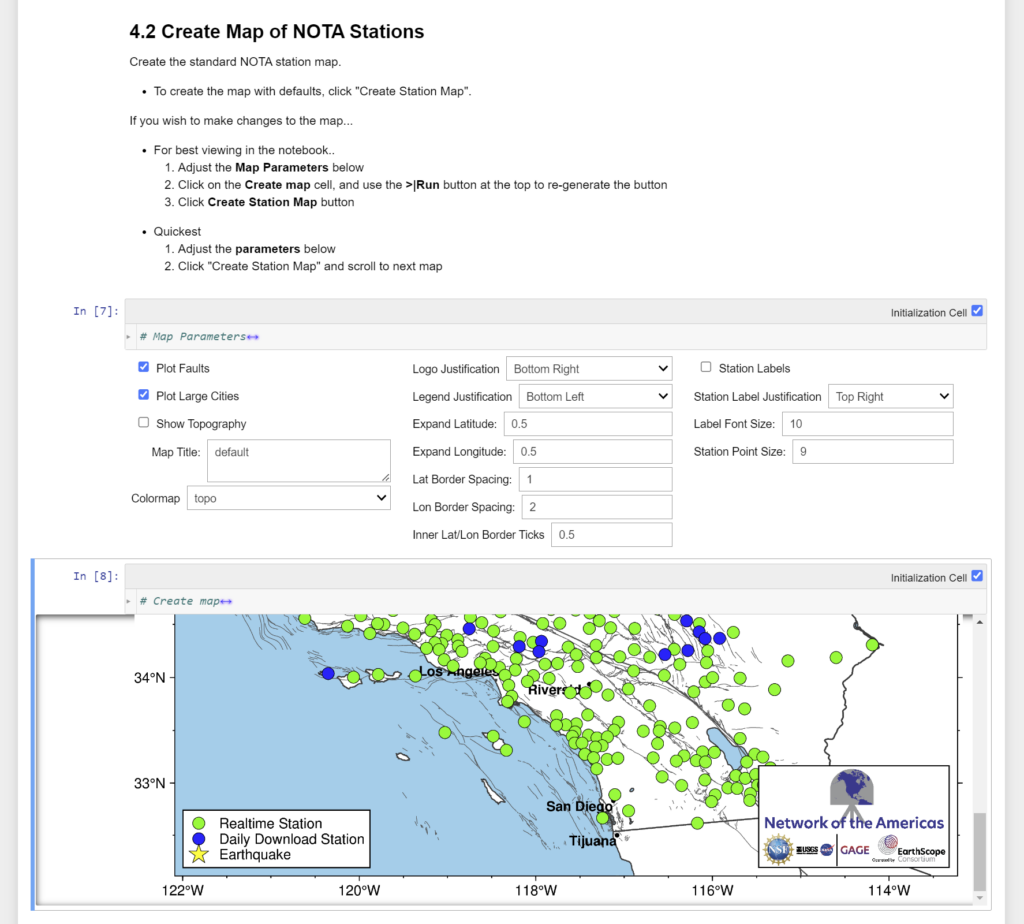

The output of your code — like visualizations or processed results — can also be displayed inside the notebook. This can make a notebook a full-service tool for interacting with and visualizing data for students, for example. Or it can make it an exploratory tool for quickly iterating to understand the shape of a new dataset or technique.

Why use notebooks?

The cofounders of Jupyter have written that “even though Jupyter helps users perform complex, technical work, Jupyter itself solves problems that are fundamentally human in nature. Namely, Jupyter helps humans to think and tell stories with code and data.”

There are a number of use cases that have made notebooks popular in science, specifically. One of these is the ease with which you can share them with others. When hosted on a server, multiple users can engage with them immediately—no setup required—in the same environment. Colleagues can more easily collaborate on a notebook, and students can jump right into that workflow and focus on scientific content and analysis rather than management and configuration of the technical environment.

The NISAR Science Team, for example, has shared data processing algorithms with its community as notebooks prior to launch. Likewise, the open science Pangeo project has been using notebooks to share tools that “allow researchers to access, process, and analyze NASA data in the commercial cloud without having to download the data”.

This simplified sharing also makes notebooks useful for reproducibility and transparency efforts, as you can document your analysis methods in a ready-to-run package. When the Laser Interferometer Gravitational-Wave Observatory (LIGO) team announced the detection of gravitational waves in 2016, there was obvious interest in analyzing and replicating the data analysis. The team published detailed notebooks that served as a tutorial and documentation for their results.

It’s also worth noting that notebooks are more flexible and extensible than you might think at first glance. Notebooks can be turned into static web pages, run by a browser even without a server, or embedded in web pages to power web apps. They can also interact with systems that aren’t notebooks, like web services, software installed in the same environment, or even a distributed computing architecture.

How to get started

You can create Jupyter Notebooks to run on your local computer by installing JupyterLab, or through Microsoft’s Visual Studio Code. Alternatively, you can work with Python Jupyter notebooks hosted by remote servers such as Google Colab or Binder.

There are many beginner-friendly tutorials for these tools (or for coding languages like Python) that will help you quickly get your feet wet, in addition to geophysical notebook collections you could explore once you’re comfortable.

Just as Google Colab runs on Google’s servers, it’s possible to set up JupyterLab on a server or cloud space you control for multiple users to share — a platform known as JupyterHub. Partnering with 2i2c, we’re providing a JupyterHub to run notebooks in our cloud data system that you can access using your EarthScope data login. In addition to being an easy entry point for building notebooks, our hub offers some benefits specific to our community.

Future advantages

As we optimize the data archive for cloud processing, there will be functional advantages to performing data analysis from our notebook hub. Because the hub and the data will exist in the same cloud ecosystem, you’ll be able to leverage server-side processing to run data-intensive workflows extremely efficiently and you won’t have to spend long periods of time waiting for data to download — making it more intuitive and faster to run your analysis.

You’ll be able to take advantage of notebooks’ strengths for exploratory cloud data analysis, visualization, and data storytelling. Beyond that, we will map pathways to more data-intensive cloud computing, so users can migrate their workflows to more script-based development for improved versioning, testing, and scalability as needed.

This notebook hub is also intended to facilitate community collaboration. Technical short courses can simplify and centralize access to tutorial content. (So short courses may double as your introduction to the hub.) It also provides a common location where teams can work together on data analysis methods. And as time goes on, a growing set of curated community tools will be available to everyone. It is our hope that this platform proves to be convenient and extremely useful — particularly for optimizing your work for cloud computing.